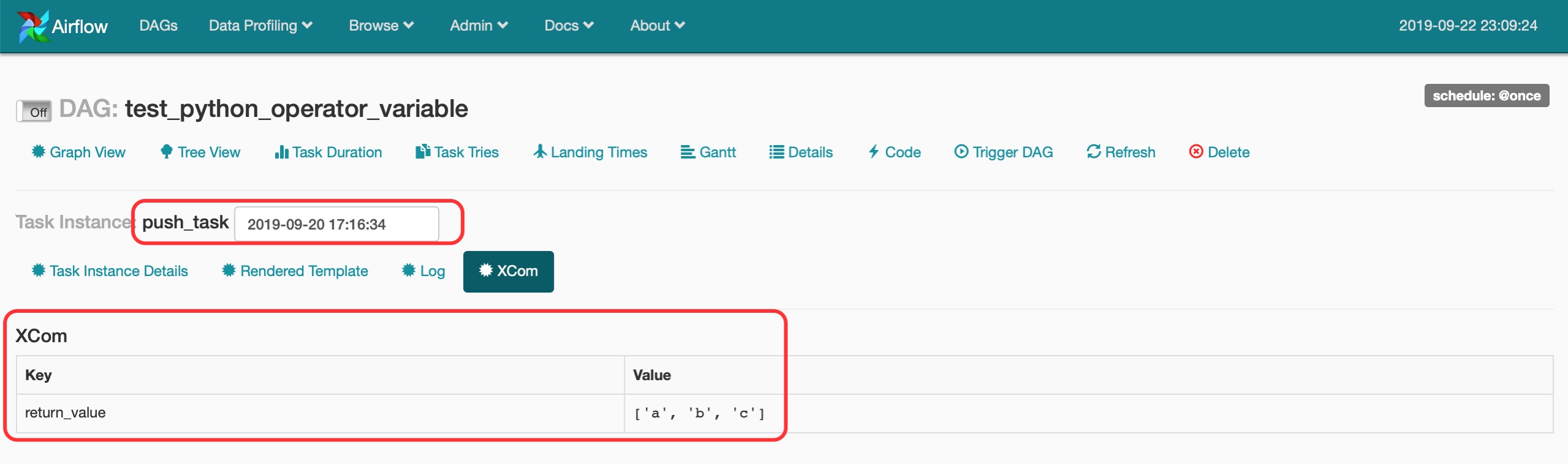

This is a Kubernetes operator to manage Apache Airflow ensembles. It is part of the Stackable Data Platform, a curated selection of the best open source data apps like Kafka, Druid, Trino or Spark, all working together seamlessly. Based on Kubernetes, it runs everywhere, on prem or in the cloud.

You can install the operator using stackablectl or helm. Read on to get started with it, or see it in action in one of our demos.

Stable documentation is published for this operator. If you are interested in the most recent state of this repository, check out the nightly docs instead. Stackable also publishes documentation for all of its products.

If you have a question about the Stackable Data Platform, contact us via our homepage or ask a public question in our Discussions forum.

This operator is written and maintained by Stackable and it is part of a larger data platform. Stackable makes it easy to operate data applications in any Kubernetes cluster. The data platform offers many operators, with new ones being added continuously.

Nightly test suite (#296). Part of stackabletech/ci#62. beku invocations from the airflow-operator checkout (branch feat/nightly-suite):

    beku -s nightly
    beku -s openshift
    beku -s smoke-latest

I have a use case with three tasks: Task1 (BigQueryOperator), Task2 (PythonOperator) and Task3 (PythonOperator). Task3 is triggered after Task1 and Task2. In Task3, I need to fetch the task-level information of the previous tasks (Task1, Task2), i.e. job_id, task_id, run_id, the state of each task and the URL of the tasks. To my understanding, the context object can be used to fetch these details, as it is a dictionary that contains various attributes and metadata related to the current task execution. I am unable to make use of this object for fetching the task-level details of a BigQueryOperator.

Approach 1: Tried xcom_push and xcom_pull to fetch the details from the task instance (ti).

    def task2(ti, project):
        client = bigquery.Client(project=bq_project)
        ...
        xvc = client.query(sql_str1, job_config=job_config).to_dataframe().values.tolist()
        #task_status = ti.status
        # Pass the extracted values to the next task using XCom
        ti.xcom_push(key='task2_job_id', value=job_id)
        ti.xcom_push(key='task2_run_id', value=run_id)
        ti.xcom_push(key='task2_task_id', value=task_id)

    def task3(ti, dag_id, task_id, run_id, task_state):
        ...

The answer attaches a callback to the BigQuery task instead:

    if name in ('task1'):  # If condition is used for PythonOperator
        ...

    T1 = BashOperator(task_id="first", bash_command='echo starting')
    T3 = BashOperator(task_id="secound", bash_command='echo end')
    T2 = BigQueryOperator(
        task_id='RunLoadJobWhenSourceModified',
        sql='select * from ',
        use_legacy_sql=False,
        on_success_callback=my_task_function,
    )

In the above code, I passed one argument to the function instead of keyword arguments and the function worked as expected. For more information about callbacks, you can refer to the official Airflow documentation and the related Stack thread.
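The callback idea discussed above can be sketched roughly as follows. This is a minimal illustration and not the original poster's code: it assumes Airflow passes the callback a single context dictionary containing a `ti` entry and a `run_id` entry (as in the Airflow 2 releases I am aware of), and that pushing to XCom from inside a success callback is acceptable in your setup. The names `collect_task_details`, `report_upstream`, `DETAILS_KEY` and `FakeTaskInstance` are hypothetical; the fake class only stands in for Airflow's task instance so the sketch runs without Airflow installed.

```python
from types import SimpleNamespace

DETAILS_KEY = "task_details"  # hypothetical XCom key, not an Airflow built-in


def collect_task_details(context):
    """Callback taking ONE positional argument: the task's context dict."""
    ti = context["ti"]
    details = {
        "dag_id": ti.dag_id,
        "task_id": ti.task_id,
        "run_id": context["run_id"],
        "state": str(ti.state),
        "log_url": ti.log_url,
    }
    # Share the details so a downstream task (e.g. Task3) can read them.
    ti.xcom_push(key=DETAILS_KEY, value=details)
    return details


def report_upstream(ti, upstream_task_ids):
    """Body for a downstream task: pull whatever each upstream task pushed."""
    return {
        task_id: ti.xcom_pull(task_ids=task_id, key=DETAILS_KEY)
        for task_id in upstream_task_ids
    }


# --- Stand-ins below are assumptions so the sketch runs without Airflow ---
_XCOM = {}  # in-memory substitute for Airflow's XCom storage


class FakeTaskInstance(SimpleNamespace):
    def xcom_push(self, key, value):
        _XCOM[(self.task_id, key)] = value

    def xcom_pull(self, task_ids, key):
        return _XCOM.get((task_ids, key))


bq_ti = FakeTaskInstance(
    dag_id="example_dag",
    task_id="RunLoadJobWhenSourceModified",
    state="success",
    log_url="http://localhost:8080/log",  # placeholder URL
)
collect_task_details({"ti": bq_ti, "run_id": "manual__example"})

task3_ti = FakeTaskInstance(dag_id="example_dag", task_id="task3")
summary = report_upstream(task3_ti, ["RunLoadJobWhenSourceModified", "task2"])
print(summary)
```

In a real DAG the function would be attached with `on_success_callback=collect_task_details`, as in the `T2` definition, and `report_upstream` would be called from Task3's Python callable. Tasks that never pushed details simply come back as `None`.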
